
What is Docker?

Docker is a set of PaaS (Platform as a Service) products that uses OS-level virtualization to run multiple isolated applications on top of the host's operating system. Its container-based technology packs an application together with its libraries, dependencies and configuration files so that it can be deployed as a single package. Containers are isolated from one another and interact only through well-defined channels. This isolation and ease of deployment are Docker's key benefits: developers can run many applications side by side on a single host, sharing the host's kernel instead of booting a separate operating system for each one.

What is Virtualization?

Now that you have seen what Docker is, you might wonder whether this is not what we already do with virtualization. It is, because Docker itself is another form of virtualization. To understand the difference between the two, and what sets Docker apart from traditional virtualization, you first need to know what virtualization is.

Virtualization is the creation of a virtual, rather than physical, version of a computer system, including hardware platforms, storage devices and network resources, typically running on top of a host operating system or a hypervisor. The common approaches are full virtualization, paravirtualization and OS-level virtualization; Docker belongs to the last category. The main aim of virtualization is to manage workloads and improve scalability, which it achieves by decoupling software from the underlying physical hardware.

Why do we go with Docker?

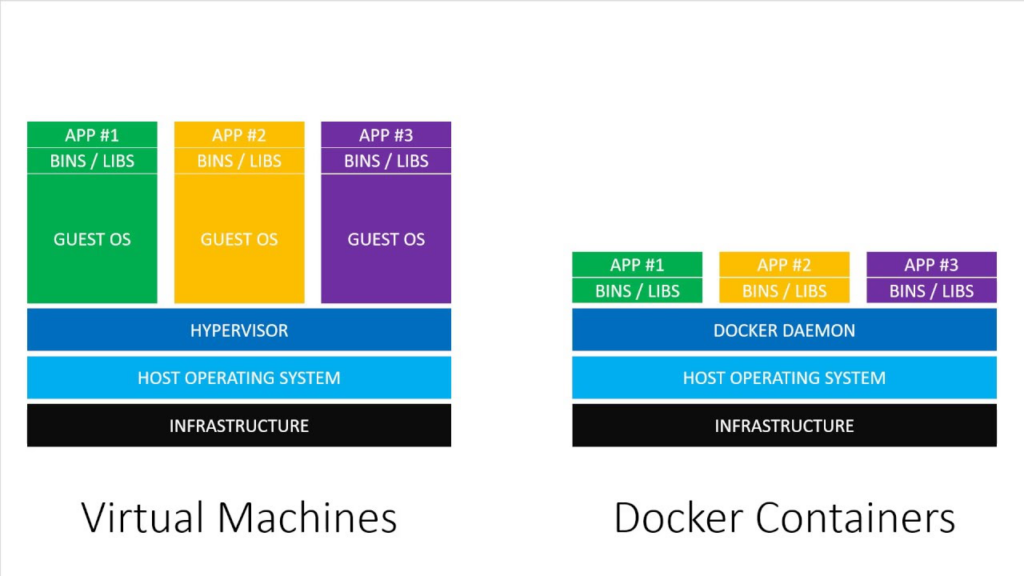

Unlike traditional virtualization, Docker uses fewer resources because it works at the operating-system level through its container approach, called Docker containers. It addresses several shortcomings of virtual machines, such as slow deployment, unstable performance, security risks and scalability issues.

An application's binaries, libraries and other dependencies run directly on the host kernel, which makes containers lightweight and faster to execute than VMs. Containers are also isolated from one another, which limits the impact of security and data-loss problems in any single container. At the same time, because containers use the host OS, they can share libraries and resources when required. To learn more about Docker and its features, go to https://www.docker.com/

Virtualization Architecture vs Docker Architecture:

Over the years, virtual machines have been the go-to option for many organizations, especially for cloud infrastructure. Yet one technology surpasses VMs in terms of lightweight architecture, cost benefits and scalability, and that is Docker.

Docker's container-based technology focuses on improving the scalability of an environment by running applications as distributed, independently deployable containers. The following section explains the differences between Docker containers and virtual machines.

Difference between Virtualization and Docker:

As mentioned earlier, virtualization and Docker serve the same purpose: helping us manage workloads and scale them. The question is which of the two helps us achieve this better. The comparison table below summarizes the pros and cons of each.

| S. No. | Virtualization | Docker |
|---|---|---|
| 1 | Slow deployment; boot-up takes up to a minute. | Faster execution; boots up within a fraction of a second. |
| 2 | Data backup is at high risk. | Ability to roll back. |
| 3 | Made up of both the user space and the kernel space of the OS. | Made up of only the user space of the OS. |
| 4 | Uses the hardware resources of the host machine. | Runs as just another process on the host system. |
| 5 | Requires a complete OS for each workload. | Can run multiple workloads within one OS. |
| 6 | Resource-intensive; uses a hypervisor. | Requires fewer IT management resources; uses the Docker Engine. |

Docker Terminologies:

Docker Image

A Docker image is a read-only template that bundles multiple elements an application needs, such as code, libraries, configuration files, and environment and system variables. When run, an image is executed as a Docker container, and one image can back one or many container instances. Because images are immutable, they can be shared, duplicated and deleted safely, which makes them reusable. Images are portable and serve as the common artifact passed between other Docker functions such as build, push, pull and run.

Dockerfile

To assemble an image, you write a Dockerfile: a plain text document that contains, in order, all the commands a user could call on the command line to build the image. Running the docker build command then creates an automated build that executes these instructions in succession.
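As a minimal sketch, assuming a small Python app whose entry point is a hypothetical app.py with its dependencies listed in a hypothetical requirements.txt, the snippet below holds a typical Dockerfile as text, writes it into a build context directory, and assembles the docker build command that would turn it into an image tagged myapp:1.0 (also a made-up name). The base image python:3.12-slim is just one reasonable choice, not a requirement.

```python
import subprocess
from pathlib import Path

# Illustrative Dockerfile: the base image, requirements.txt and app.py are assumptions.
DOCKERFILE = """\
FROM python:3.12-slim
WORKDIR /app
# Install dependencies first so this layer can be reused from the build cache
COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt
COPY . .
CMD ["python", "app.py"]
"""


def write_dockerfile(context_dir: Path) -> Path:
    """Write DOCKERFILE into the build context directory and return its path."""
    path = context_dir / "Dockerfile"
    path.write_text(DOCKERFILE)
    return path


def build_command(tag: str, context_dir: Path) -> list[str]:
    """Return the argv for `docker build`, tagging the resulting image."""
    return ["docker", "build", "-t", tag, str(context_dir)]


def build_image(tag: str, context_dir: Path) -> None:
    """Write the Dockerfile and run the build (requires a local Docker installation)."""
    write_dockerfile(context_dir)
    subprocess.run(build_command(tag, context_dir), check=True)
```

Calling build_image("myapp:1.0", Path(".")) is equivalent to running docker build -t myapp:1.0 . by hand. Copying requirements.txt before the rest of the source lets Docker reuse the cached dependency layer when only the application code changes.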

Docker Compose

Docker Compose is a tool for defining and running multi-container applications, whose services, networks and volumes are described in a YAML file. It lets you run multiple containers together as a single application. For example, a single file can start both the Nginx and MySQL containers together, without the need to start each one individually. You can use either the .yaml or the .yml extension for Compose files.
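To make the Nginx and MySQL example concrete, here is a minimal sketch that describes both services as a Python dictionary and writes it out as a Compose file. It serializes with the standard json module because JSON is, in practice, also valid YAML, which avoids a third-party YAML dependency. The service names web and db, the host port 8080, the volume name db-data and the password are all illustrative assumptions.

```python
import json
from pathlib import Path


def compose_spec(mysql_password: str) -> dict:
    """Describe an Nginx web service and a MySQL database as one Compose project."""
    return {
        "services": {
            "web": {
                "image": "nginx:latest",
                "ports": ["8080:80"],       # host port 8080 -> container port 80
                "depends_on": ["db"],       # start db before web (start order only)
            },
            "db": {
                "image": "mysql:8.0",
                "environment": {"MYSQL_ROOT_PASSWORD": mysql_password},
                "volumes": ["db-data:/var/lib/mysql"],  # named volume keeps the data
            },
        },
        "volumes": {"db-data": {}},
    }


def write_compose_file(path: Path, spec: dict) -> None:
    """Write the spec as JSON, which YAML parsers can read as a Compose file."""
    path.write_text(json.dumps(spec, indent=2))
```

With the output saved as compose.yaml, docker compose up -d would start both containers together and docker compose down would stop and remove them. Note that depends_on only controls start order; it does not wait for MySQL to be ready to accept connections.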

Docker Containers

The purpose of a container is to pack up all the code, dependencies, system tools, libraries, settings, etc. so that an application can run quickly and reliably from one computing environment to another. In simple words, Docker containers are lightweight, standalone and executable packages of software that are isolated from one another but communicate with each other via well-defined channels.
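The idea that one image can back several isolated containers can be sketched as follows. Assuming a local Docker installation and the public nginx image, the snippet builds one docker run command per replica; each container gets its own hypothetical name (web0, web1, ...) and its own host port, while all of them are started from the same image.

```python
import subprocess


def run_command(image: str, name: str, host_port: int, container_port: int = 80) -> list[str]:
    """Return the argv for `docker run` that starts one detached, named container."""
    return [
        "docker", "run",
        "-d",                                   # run in the background (detached)
        "--name", name,                         # names must be unique per host
        "-p", f"{host_port}:{container_port}",  # publish the container port on the host
        image,
    ]


def replicas(image: str, count: int, base_port: int = 8081) -> list[list[str]]:
    """One image, several isolated containers, each with its own name and host port."""
    return [run_command(image, f"web{i}", base_port + i) for i in range(count)]


def start_all(commands: list[list[str]]) -> None:
    """Start every container (requires a running Docker daemon)."""
    for cmd in commands:
        subprocess.run(cmd, check=True)
```

Under these assumptions, start_all(replicas("nginx:latest", 3)) would leave three independent Nginx containers reachable on host ports 8081 to 8083; stopping or deleting one of them does not affect the others.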

Docker Engine

The Docker Engine is responsible for building, shipping and running container-based applications. It is a client-server application: a long-running program known as the daemon process acts as the server and creates and manages images, containers, networks and storage volumes, while clients send it requests through an API.

Docker Daemon

The Docker daemon (dockerd) is one of the three components of the Docker Engine, alongside the REST API and the docker command-line client. It listens for Docker API requests and manages Docker objects such as images, containers, networks and volumes. It runs as a long-lived server process and can also communicate with other daemons to manage Docker services.

Because the Docker daemon relies on Linux kernel features, it runs natively on Linux; on Windows and macOS it is typically run inside a lightweight Linux virtual machine, for example by Docker Desktop.
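Because the daemon is simply a server that answers API requests, any HTTP client can talk to it. The sketch below assumes a Linux host where dockerd listens on its default Unix socket, /var/run/docker.sock, and the current user is allowed to access it. Using only Python's standard library, it sends GET /version to the Engine API, which returns the kind of server details shown by the docker version command. The class name UnixHTTPConnection is our own, not part of any library.

```python
import http.client
import json
import socket


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTPConnection that connects to a Unix domain socket instead of TCP."""

    def __init__(self, socket_path: str, timeout: float = 5.0):
        # "localhost" is only used for the Host header; no TCP connection is made.
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self) -> None:
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        sock.connect(self.socket_path)
        self.sock = sock


def daemon_version(socket_path: str = "/var/run/docker.sock") -> dict:
    """Ask the daemon for its version via GET /version of the Engine API."""
    conn = UnixHTTPConnection(socket_path)
    try:
        conn.request("GET", "/version")  # connect() is called lazily here
        resp = conn.getresponse()
        return json.loads(resp.read())
    finally:
        conn.close()
```

The docker CLI works the same way under the hood: each command is translated into requests against this API, which is why the client and the daemon can even run on different machines.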

Docker Volumes

Docker volumes are used to persist (save and share) data in Docker containers and services. A volume lives outside the container's default union file system and exists as normal files and directories on the host file system.
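A minimal sketch of volume persistence, assuming a named volume called app-data (a made-up name) and the small alpine image: the first command writes a file into the volume from one throwaway container, and the second reads it back from a completely new container. Both use the --mount type=volume syntax of docker run; the --rm flag deletes each container when it exits, yet the data survives in the volume.

```python
import subprocess


def volume_create_command(name: str) -> list[str]:
    """Return the argv for creating a named volume managed by Docker."""
    return ["docker", "volume", "create", name]


def run_with_volume(image: str, volume: str, target: str, *cmd: str) -> list[str]:
    """Return the argv for a throwaway container with `volume` mounted at `target`."""
    return [
        "docker", "run", "--rm",  # delete the container when it exits
        "--mount", f"type=volume,source={volume},target={target}",
        image, *cmd,
    ]


# Hypothetical volume "app-data": one container writes, a brand-new one reads.
WRITE = run_with_volume("alpine", "app-data", "/data",
                        "sh", "-c", "echo hello > /data/greeting.txt")
READ = run_with_volume("alpine", "app-data", "/data", "cat", "/data/greeting.txt")


def demo() -> str:
    """Run both containers (requires Docker) and return the text read back."""
    subprocess.run(volume_create_command("app-data"), check=True)
    subprocess.run(WRITE, check=True)
    result = subprocess.run(READ, check=True, capture_output=True, text=True)
    return result.stdout.strip()
```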

Docker Registries

A Docker registry is where you store your images. Registries can be public or private. Anyone can use a public registry, and Docker is configured by default to look for images on Docker Hub.

Alternatively, you can run your own private registry and pull and push images to and from it with the docker pull and docker push commands.
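Which registry docker pull or docker push talks to is decided by the image name itself. The simplified sketch below mirrors the usual rule: if the first path component looks like a host name (it contains a dot or a colon, or is localhost), it names the registry; otherwise the image comes from Docker Hub (docker.io), and single-name official images sit under the implicit library/ namespace. It deliberately ignores digests (@sha256:...) and other edge cases, so treat it as an illustration rather than Docker's actual parser.

```python
from typing import NamedTuple

DEFAULT_REGISTRY = "docker.io"


class ImageRef(NamedTuple):
    registry: str
    repository: str
    tag: str


def parse_image_ref(ref: str) -> ImageRef:
    """Simplified split of an image reference into registry, repository and tag."""
    first, sep, rest = ref.partition("/")
    # A first component with "." or ":" (or "localhost") is treated as a registry host.
    if sep and ("." in first or ":" in first or first == "localhost"):
        registry, remainder = first, rest
    else:
        registry, remainder = DEFAULT_REGISTRY, ref
    # The tag is whatever follows the last ":" in the final path component.
    name, colon, tag = remainder.rpartition(":")
    if not colon or "/" in tag:
        name, tag = remainder, "latest"
    # Official images on Docker Hub live under the implicit "library/" namespace.
    if registry == DEFAULT_REGISTRY and "/" not in name:
        name = f"library/{name}"
    return ImageRef(registry, name, tag)
```

For example, with a private registry started locally via docker run -d -p 5000:5000 --name registry registry:2, an image retagged as localhost:5000/myapp:1.0 (a hypothetical name) resolves to the registry localhost:5000, so docker push sends it there instead of to Docker Hub.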

Docker Hub

Docker Hub is a service provided by Docker whose purpose is to foster collaboration among your peers and make pushing and pulling images easy. With Docker Hub, you can find and share container images with your peers and automatically build container images from source repositories hosted on services like GitHub and Bitbucket.

Docker DTR

Docker DTR (Docker Trusted Registry) is one of the enterprise services offered by Docker. To meet security and other regulatory compliance requirements, DTR lets enterprises store and manage their Docker images either on-premises or in their own VPC (virtual private cloud).

To learn the basic Docker commands, see the official Docker CLI reference: https://docs.docker.com/engine/reference/commandline/docker/

Conclusion:

To sum up, you have learned what Docker is, how it works, why Docker is often chosen over virtualization, and which aspects and benefits set container technology apart from traditional VMs. We have also explained a list of important Docker terms to help you install and use Docker. If you need to install Docker on your system or want to switch from virtualization to Docker, see our blog post How to Install Docker on Ubuntu 18.04.

Apart from building containers and the Docker Engine, there is one more technology to understand: container orchestration. Orchestration is what hosts and manages multiple containers when you choose to run your services in containers.